You need a baseline for your latest time series forecasting project? You want to explain the decision-making process of a predictive model to a business audience? You would like to understand if car prices are seasonal before buying a new one? We might have something for you! This article introduces Streamlit Prophet, a web app to help data scientists train, evaluate and optimize forecasting models in a visual way. Forecasts are made with Prophet, a fast and easily interpretable model.

You can test the app online here but it might not be available at all time, due to limited shared computing resources. Another option is to install the python package and run it locally.

What is Streamlit Prophet?

Streamlit Prophet is a Python package through which you can deploy an app to build time series forecasting models visually and without any coding. Once you have uploaded a dataset with historical values of the signal to be forecasted, the app trains a predictive model in a few clicks, along with several visualizations to help you evaluate its performance and get further insights.

The underlying model is built with Prophet, an open source library developed by Facebook to forecast time series data. The signal is broken down into several components such as trend, seasonalities and holidays effects. The estimator learns how to model each of these blocks separately and then adds up their different contributions to produce an easily interpretable forecast. It performs better when the series have strong seasonal patterns and when several cycles of historical data are available. You can have a look at this thread or this article if you wish to learn more about Prophet’s mathematical foundations.

The interface is made with Streamlit, a Python framework for building data science web apps.

What are the main features?

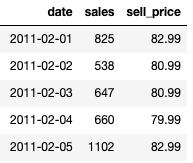

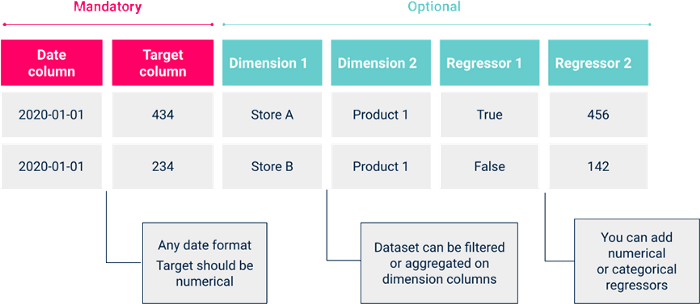

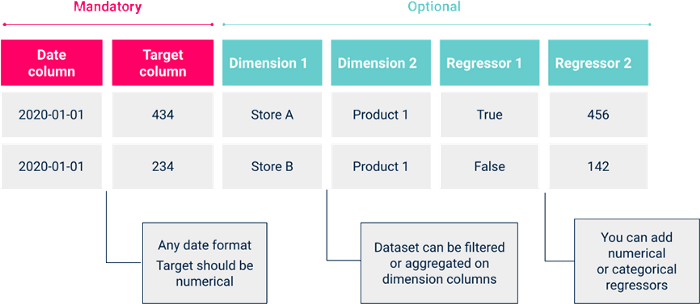

Streamlit Prophet is meant to help data scientists and business analysts get up and running quickly on their time series projects. As an illustration, let’s say that we would like to predict future sales for consumer goods in a particular store, given historical data ranging from 2011 to 2015. Our dataset looks like the table below.

A baseline model with default parameters is fitted on the data as soon as it is uploaded. Now let’s see how we could use Streamlit Prophet to improve it and achieve a better understanding of the phenomenon.

Data exploration

The first step in any forecasting project is to make sure that the dataset has no secrets for you. Prophet natively provides a nice decomposition of the signal to help you achieve that goal. Several charts are available in the app to get these valuable insights at a glance.

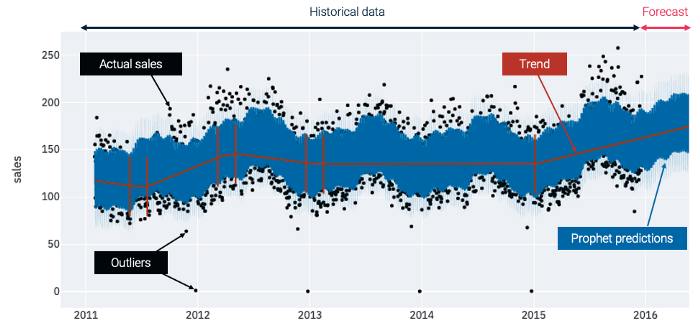

The following graph is a good starting point as it gives a global representation of the uploaded time series and contains a lot of useful information.

The black points are actual historical sales, which are most of the time comprised between 75 and 225 units per day. Some outliers with no sales or with low volumes can be spotted at the end of each year around Christmas, when stores are probably closed. The trend is displayed on a red line to get a more synthetic vision of the signal and visualize global evolutions. Finally, the blue line represents the forecasts made by a Prophet model that is automatically trained on your dataset. Here we can see that the model expects sales to increase in 2016, following the growing trend that started in 2015.

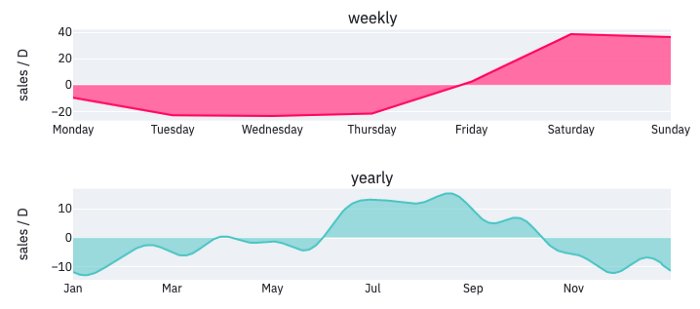

These forecasts seem to be seasonal, but it is hard to distinguish the different periodic components on this first plot. Let’s check another visualization to understand how these seasonal patterns affect the model output.

Two periodicities have been detected and provide some interesting insights about consumers’ habits. The weekly cycle shows that most people shop on the week-ends, during which forecasts are increased by nearly 40 units per day. The graph also suggests that the products sold have a yearly seasonality, with slightly more sales during summer than the rest of the year. These periodic components and the global trend will then be combined by the estimator to produce forecasts for future days.

Performance evaluation

These plots synthesize the way data is modeled by Prophet, but how can we make sure that this representation is reliable? To answer this legitimate question, a section of the app is dedicated to evaluate the model quality. It quickly provides the user with a baseline forecasting performance. The time series is split into several parts in order to do so: the model is first fitted on a training set and then tested on a validation set. Other options like cross-validation are also available for a more advanced usage.

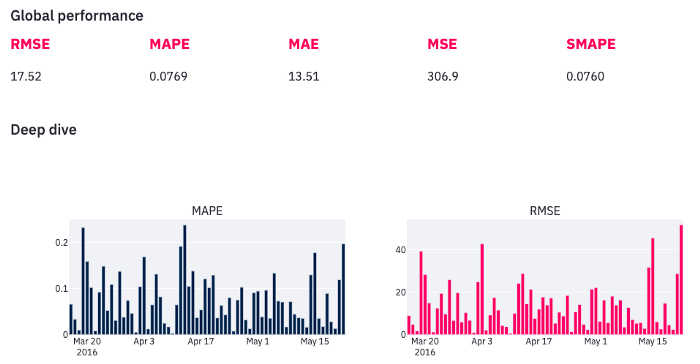

Different metrics can be used to assess the model quality: absolute metrics such as the root mean squared error (RMSE) are helpful to get an idea of the magnitude of errors in terms of number of sales, but relative ones like the mean absolute percentage error (MAPE) might be more interpretable. It is up to you to select the metric that is the most relevant for your use case.

However, performance is unlikely to be uniform over all data points, therefore getting a global indicator is not enough. We should compute metrics at a more detailed granularity to get a clear understanding of the model quality. Let’s start with an in-depth analysis at the daily level, which is the lowest possible granularity in our case, as the model makes one prediction per day.

We can observe an important variability: there are days when the error is bigger than 20% while some other forecasts are almost perfectly accurate. With that information in mind, you probably can’t help but wonder if there are patterns in the way the model makes mistakes. Are there some particular days when we can expect it to perform poorly? Fortunately, the app provides some handy charts that will help us satisfy our curiosity.

Error diagnosis

The error diagnosis section is probably the most useful one, as it allows you to highlight the areas where forecasts could be improved and thus identify more precisely the main challenges you will face to build a reliable forecasting model.

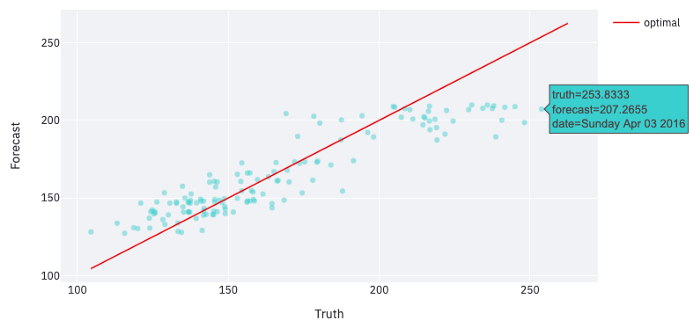

Several visualizations are available to achieve that investigation. They are interactive, so you can easily focus on some particular areas. For example, the scatter plot below represents each forecast made on the validation set by a single point, and hovering over the ones that are distant from the red line helps us understand for what kind of data points forecasts are far from the truth.

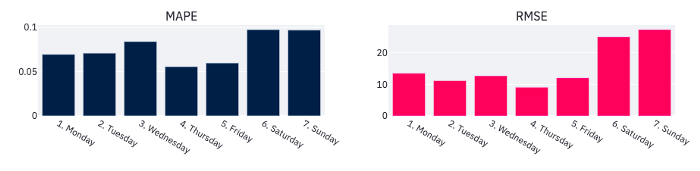

In our example, hovering over the top right area shows that the points furthest from the red line are Saturdays and Sundays, which suggests that the model performs better during the week. Let’s aggregate performance metrics by day of week to validate this intuition.

Errors are indeed bigger on average on the week-ends than during the rest of the week, which is an information to keep in mind when trying to optimize the model. Performance might also evolve over time, therefore it is possible to select other levels of aggregation available in the app to check it out. We could for example compute metrics at a weekly or monthly granularity, or over a specific period of time during which we suspect it to perform differently than usual.

Model optimization

Once we have discovered the model’s main weaknesses, several options are available to improve it: the app’s sidebar allows you to edit the default configuration and enter your own specifications. All performance metrics and visualizations are updated each time you change settings, in order to get quick feedback.

The first way to achieve better performance is to apply some customized pre-processing to your dataset. Several alternatives are possible to get around the challenges identified earlier. For example, a cleaning section allows us to get rid of the outliers observed around Christmas, which might confuse the model. We could also filter out some particular days, and thus easily train distinct models for the week and the week-ends, as they seemed to be associated with different purchasing behaviors. Some other filtering and resampling options are available as well, in case they are relevant to the problem at hand.

Prophet hyper-parameters can also be tuned to help the model better fit the data. These parameters influence how the estimator learns to represent the trend and seasonalities from historical sales, and the relative weight of these components in the global forecast. Don’t worry if you’re not familiar with Prophet models, some tooltips explain the intuition behind each parameter and guide you through the tuning process. In the modeling section, you can also feed the model with external information such as holidays or variables related to the signal to be forecasted (like the products’ selling price for example). These regressors are likely to improve performance as they provide the model with additional knowledge about a phenomenon that impacts sales.

Forecast interpretability

Having an accurate forecasting model is nice, but being able to explain the main factors that contribute to its predictions is even better. The last section of the app aims at helping us understand how the model we have just built makes decisions. There are different ways to address this question: we can either look at a single component and see how its contribution to the overall forecasts evolves over time, or we can take a single forecast and decompose it into the sum of contributions from several components.

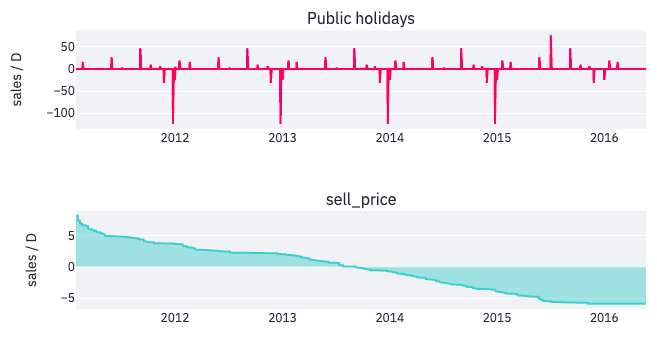

Let’s start with the first option. The different components that influence forecasts are the trend, seasonalities and external regressors. We have already observed the impact of weekly and yearly seasonalities, so let’s focus on the external regressors we have included in the model optimization section: holidays and the products’ selling price.

The impact of some public holidays is quite important: for example, Labor Day increases forecasts by 50 sales every year at the beginning of September, and the dips on Christmas show that the model has taken into account the fact that stores are closing on that day. As for the price, it has increased year after year and therefore its impact on sales has shifted from positive to negative.

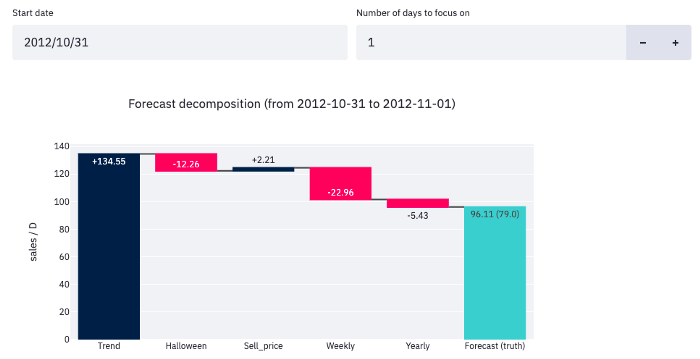

It might also be useful to explain how the model produced one specific forecast, especially when a particular event influences the prediction. The following waterfall chart shows this decomposition for the forecast made on October 31st 2012.

In this example, the model ended up forecasting 96 sales, which is the sum of the contributions from five different components:

That kind of decomposition is not only useful for sharing insights with collaborators, it can also help analysts understand why their model doesn’t perform as expected. If needed, several parameters are available in the app’s sidebar to increase or decrease the different components’ relative weights.

How to get started?

Running the app on your own computer is pretty straightforward. The only prerequisite is tohave Python installed. Some more requirements are needed for Windows users (see repository for more details). Then, you can follow the instructions below to get started.

Installation

We recommend creating a new virtual environment to avoid dependencies issues or incompatibility with your current one. Once your new environment is activated, you can install the package with the following command. The installation can take a few minutes (5–10).

Run

Now that the package has been installed, a single command allows you to launch the app from your terminal and open it in your default web browser.

And you are ready to build Prophet models! In order to start modeling, you first need to upload your dataset as a csv file with the following format.

Then, you can provide your specifications in the sidebar to perform the pre-processing tasks that meet your needs and tune the model hyper-parameters. Once you are happy with the results, save your experiment to keep all visualizations and be able to reproduce it easily afterwards.

Cloud deployment

If you wish to make the app easily accessible to several collaborators without making them download Python and install the package, you can deploy the app on the cloud. The first thing you need to do is to clone the git repository. Then, a Docker command allows you to easily containerize the application and create an image that can be used to deploy the app on the cloud platform of your choice. This article explains in details how to do so on Google Cloud Platform.

Thanks a lot for reading, I would be glad to hear your feedback. Please feel free to reach out if you wish to contribute to the package development or have any improvement ideas. In the meantime, you can visit the project repository to watch a short demo and Artefact tech blog for more information about our data science projects.

Interested in Data Consulting | Data & Digital Marketing | Digital Commerce ?

Read our monthly newsletter to get actionable advice, insights, business cases, from all our data experts around the world!

BLOG

BLOG