

The need for abstraction

With the cloud, users are offered abstract infrastructure as a service (IaaS*: Infrastructure as a Service) rather than the finite resources they would buy themselves, known as on-premise infrastructure. The cloud can also offer a platform (PaaS*: Platform as a Service) or a function (FaaS*: Function as a Service).

For example, compare the purchase of a car with the use of a taxi.

Buying a car means knowing in advance the resources you need, including engine power, size, options, etc.

Taking a taxi is much more flexible. There’s no maintenance, it’s available on demand within a few minutes and it comes with a choice of options.

The same holds in the cloud: machine maintenance is handled by the provider (no maintenance), virtual machines are created on demand (availability in seconds or minutes), and applications can be chosen freely (choice of options), all transparently for the user.

Thanks to the “on-demand” flexibility of the cloud, AI’s often unpredictable resource needs, which come from algorithms with erratic processing loads, can be met as they arise. In this way, cloud computing lets teams focus on the problem rather than on the tools needed to solve it.

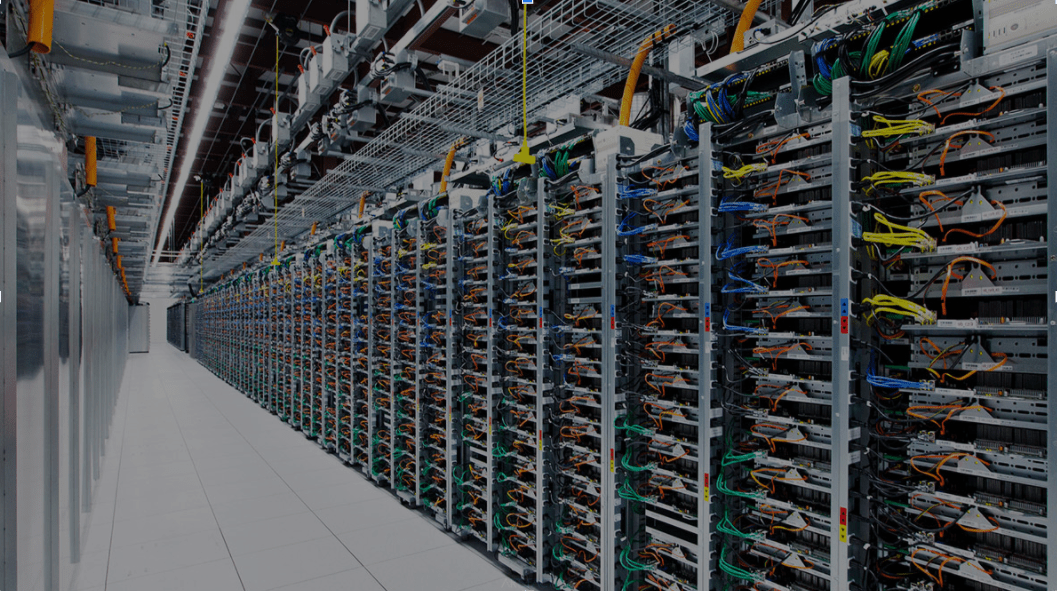

The need for computing power

AI needs power. AI leaders who are also leading cloud providers have invested in proprietary technologies to win the race for the best computing power.

Google develops its own chips, called TPUs* (Tensor Processing Units), built specifically to accelerate deep learning calculations on TensorFlow*, its dedicated open-source framework*. A pod of third-generation TPUs (TPU v3) delivers more than 100 petaflops*.

Before Google introduced TPUs, CPUs* (Central Processing Units) handled all program operations (logic, arithmetic, etc.). GPUs* (Graphics Processing Units) are chips initially developed for graphics (e.g. displaying pixels on screen). With their many cores, they can parallelise calculations very effectively. (This capability was identified in 2009, when GPUs were reported to be up to 70 times faster than CPUs on such workloads.)
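To make the idea of parallelising a calculation concrete, here is a minimal sketch in Python. It splits a dot product into independent slices, hands each slice to a worker, and combines the partial results. The function names and the choice of four workers are illustrative assumptions. Python threads do not deliver GPU-like speed, so the sketch only shows how the work is divided, not the performance gain.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_dot(chunk):
    """Dot product of one slice: the work a single core would do."""
    xs, ys = chunk
    return sum(x * y for x, y in zip(xs, ys))


def parallel_dot(xs, ys, workers=4):
    """Split two vectors into slices, process each slice independently,
    then add up the partial results."""
    size = max(1, -(-len(xs) // workers))  # ceiling division, at least 1
    chunks = [(xs[i:i + size], ys[i:i + size]) for i in range(0, len(xs), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_dot, chunks))
```

Because no slice depends on another, the same decomposition could be spread over the thousands of cores of a GPU, which is why such chips suit deep learning calculations.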

The need for cost optimisation

The cloud offers the flexibility to choose the level of abstraction from IaaS and PaaS to FaaS, which allows businesses to maximise value creation and drive cost reduction.

On-premise, machine capacity and provisioning are planned in advance and fixed for a set period of time.

On the cloud side, the teams have the flexibility to choose the model that suits them:

- ‘On-premise’ cloud: the team rents a set number of machines over the long term from a cloud provider.

- ‘On demand’ cloud: the data teams can freely increase or reduce resources according to their needs, via code or via a point-and-click interface (fully managed).

- ‘Serverless’ cloud: machines are sized automatically to match actual demand as closely as possible, at the granularity of a request, a job, etc. (e.g. Dataflow).
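The trade-off between the three models above can be sketched with some simple arithmetic. Every figure in this example is a hypothetical assumption, not a real provider price: the hourly demand, the prices (in cents per machine-hour), and the idea that the on-demand fleet is resized in blocks of four machines.

```python
import math

# Hypothetical number of machines needed in each hour (deliberately erratic).
DEMAND = [2, 2, 10, 1, 0, 0, 6, 3]

# Assumed prices in cents per machine-hour (illustrative only).
PRICE_RESERVED = 60
PRICE_ON_DEMAND = 100
PRICE_SERVERLESS = 120


def reserved_cost(demand, price):
    """Fixed fleet sized for the peak and paid for every hour."""
    return max(demand) * len(demand) * price


def on_demand_cost(demand, price, step=4):
    """Fleet resized every hour, but only in blocks of `step` machines."""
    return sum(math.ceil(d / step) * step for d in demand) * price


def serverless_cost(demand, price):
    """Billed only for the machine-hours actually consumed."""
    return sum(demand) * price


costs = {
    "reserved": reserved_cost(DEMAND, PRICE_RESERVED),
    "on_demand": on_demand_cost(DEMAND, PRICE_ON_DEMAND),
    "serverless": serverless_cost(DEMAND, PRICE_SERVERLESS),
}
```

With these assumed numbers, the finer the granularity, the lower the bill, even though the unit price rises. With a steady workload the ranking could reverse, which is why the choice of model depends on how erratic the demand is.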

The need to update AI

Only the cloud provides the scalability and ease of updating that AI requires. An AI project is always followed by a maintenance and continuous improvement phase, during which the AI is progressively refined. This process requires continual updating (integration of new data, refinement of the algorithm’s parameters, versioning, etc.).
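As a toy illustration of this update cycle, the sketch below integrates new data into a deliberately trivial "model" (a running mean) and records each update as a new version. The class and function names are hypothetical, and a real project would rely on a proper training pipeline and model registry rather than this minimal structure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVersion:
    version: int
    n_samples: int
    mean: float  # the single "parameter" of this toy model


def update(model, new_data):
    """Integrate new data, refine the parameter, and return a new version."""
    if not new_data:
        return model  # nothing to integrate, keep the current version
    n = model.n_samples + len(new_data)
    mean = (model.mean * model.n_samples + sum(new_data)) / n
    return ModelVersion(model.version + 1, n, mean)


# Each incoming batch of data produces a new, traceable version.
history = [ModelVersion(version=1, n_samples=0, mean=0.0)]
for batch in ([10.0, 20.0], [30.0]):
    history.append(update(history[-1], batch))
```

Keeping every version in `history` makes it possible to compare or roll back models as new data arrives.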

The cloud makes it possible to set up infrastructure that stays compatible with the latest AI innovations, limiting the technical debt created by initial development choices.

The need for collaboration

The cloud is a great vehicle for AI collaboration. The major players have created marketplaces, such as the one on Google Cloud Platform, where users can use or publish tools and algorithms, either for free or for a fee.

This is also demonstrated by the contributions made by the big stakeholders in the cloud and their communities of data scientists and data engineers.

The cloud is an intrinsically communal space. It’s not about pitting one technology against another; it’s a whole world. We cannot set on-premise against the cloud, because on-premise users take part in the cloud communities that spearhead innovation.
