For years, marketers have invested in better measurement. They have built dashboards, adopted attribution models, and implemented marketing mix modeling (MMM) to understand what is working. Yet even with these capabilities in place, a familiar problem remains: insights do not automatically lead to action.

That gap still exists because most organizations are not struggling to generate data. They are struggling to operationalize it. Insights often sit inside dashboards waiting for analysts to interpret them, translate them into recommendations, and move them through internal approval processes. By the time action is taken, the moment may already have passed.

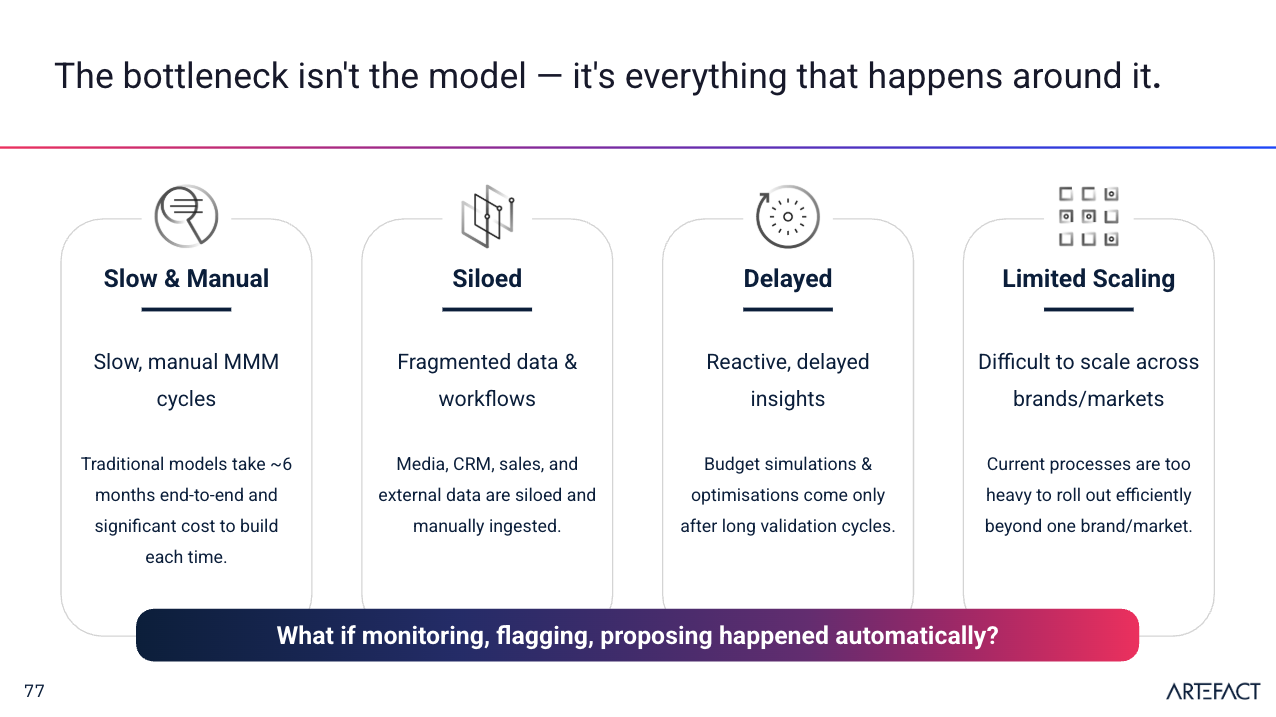

There are several reasons this happens. First, implementation takes time. A full marketing mix modeling setup is not a quick win. It requires deployment, data engineering, model refreshes, and governance. In many cases, that takes months. In a fast-moving market, six months is long enough for channel performance, consumer behavior, and competitive conditions to shift significantly.

Second, organizations are still fragmented. Brand, performance, sales, finance, and analytics teams often work across disconnected systems and inconsistent views of the data. Before any recommendation can be acted on, teams may need to align internally on what the numbers actually mean.

Third, execution is still human-heavy. Even when a model suggests an optimal budget shift, someone still needs to validate it, get approval, and implement it in the right platforms. That slows the loop between insight and action.

And finally, scale remains difficult. A measurement approach that works in one country or business unit does not automatically scale cleanly across dozens of markets, each with different channels, constraints, and business dynamics.

That is why the demo on agentic AI in marketing measurement was so relevant. It explored what happens when measurement is no longer treated as a reporting layer, but as part of an operational system that can help users move from insight to next step faster.

This blog includes a link to the recording of the demo for anyone who wants to watch the full session.

Why agentic AI matters here

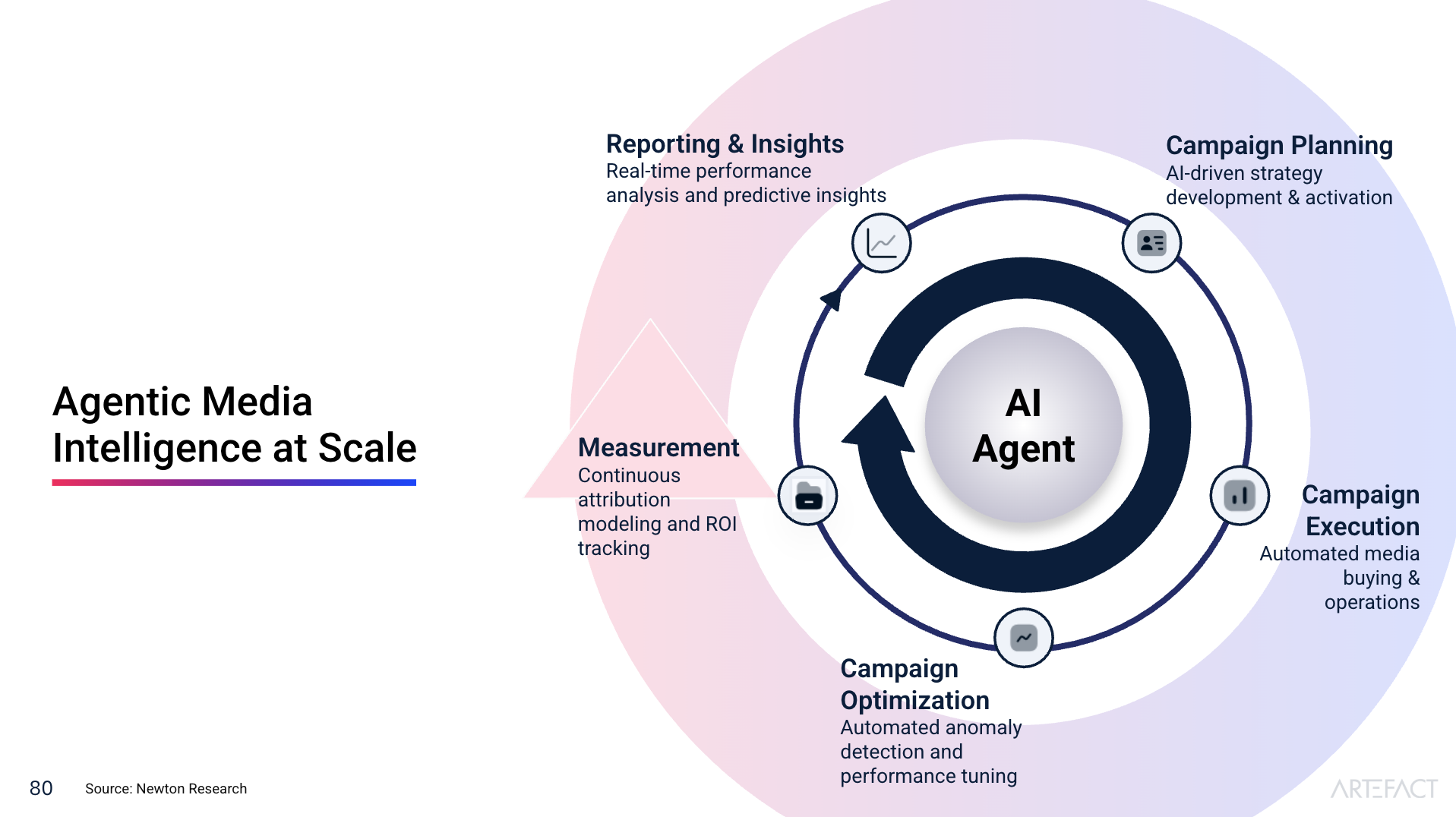

The core idea presented in the session was simple but important. Generative AI helps create outputs. Agentic AI goes further by helping carry out tasks.

In a measurement context, that means AI does not just summarize results from a model. It can flag issues, recommend follow-up actions, run checks, trigger experiments, and in some cases help implement decisions across connected systems. The marketer stays in control, but the system takes on more of the repetitive and operational work that usually slows progress.

Demo section 1: Interpreting MMM outputs

The first section gave a live example of how an agentic layer can sit on top of an MMM environment and do more than simply display results.

The demo began with a familiar MMM-style interface showing contribution analysis, baseline versus media impact, and channel ROI. But the key difference was that the system was not just presenting numbers. It was interpreting them.

One example involved an ROI estimate for online audio. The system detected that the credible interval around that result was wide, meaning the estimate was highly uncertain, and surfaced an alert. Instead of leaving the user with that uncertainty, it translated it into a recommendation: validate the finding through a GeoX test, a geo-based experiment. That was a strong illustration of the shift from passive insight to guided action.
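To make the alert logic concrete, here is a minimal sketch of how a wide-interval check might work. It is not the system shown in the demo. It assumes the model exposes posterior ROI samples per channel, and the function name, the 90% interval, and the `max_relative_width` threshold are all hypothetical choices for illustration.

```python
from statistics import median, quantiles


def roi_uncertainty_alert(roi_samples, max_relative_width=1.0):
    """Flag a channel whose 90% interval is wide relative to its median ROI.

    `roi_samples` is assumed to be a list of posterior ROI draws for one channel.
    The threshold is an illustrative assumption, not a recommended default.
    """
    # quantiles(n=20) returns 19 cut points: index 0 is the 5th percentile,
    # index 18 is the 95th percentile.
    cuts = quantiles(roi_samples, n=20)
    lower, upper = cuts[0], cuts[18]
    mid = median(roi_samples)
    relative_width = (upper - lower) / abs(mid) if mid else float("inf")
    return {
        "lower": lower,
        "upper": upper,
        "median": mid,
        "relative_width": relative_width,
        "needs_validation": relative_width > max_relative_width,
    }


# Made-up samples: one tightly estimated channel, one very uncertain channel.
tight_samples = [2.0 + 0.01 * i for i in range(-10, 11)]
wide_samples = [0.2 * i for i in range(1, 22)]
tight_result = roi_uncertainty_alert(tight_samples)
wide_result = roi_uncertainty_alert(wide_samples)
```

In a setup like this, a channel with `needs_validation` set to true would be a candidate for an experiment rather than an immediate budget change.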

Demo section 2: Natural language insight exploration

An insight agent showed how users can query measurement data conversationally instead of relying only on fixed dashboards.

The presenters then demonstrated an insight agent that could answer natural language questions and create new views of the data on demand. In the example, the user asked the system to plot Meta media impressions for 2025 and comment on the trend.

This matters because it changes how marketers interact with measurement. Rather than waiting for analysts to build custom views, users can ask questions directly, receive a visualization, and get a first layer of commentary immediately.

Demo section 3: Budget optimization and recommendation validation

The system moved from optimization output to an actionable budget recommendation, with supporting rationale and external validation.

Another part of the demo focused on budget optimization. With MMM running in the background, the system evaluated different planning scenarios and recommended a media rebalance for the coming month. The suggested move was to reduce spend in saturated digital channels and shift investment toward stronger-performing offline channels such as TV and radio.

Importantly, this was not shown as a black box. The user could inspect the opportunity further and trigger deeper research to compare the recommendation against broader industry benchmarks. That added a valuable layer of transparency before action was taken.
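The idea of moving money out of saturated channels can be illustrated with a small sketch. The demo did not disclose how its optimizer works, so everything here is an assumption: the saturating response curve, the channel parameters, and the greedy step-by-step allocation are illustrative stand-ins for whatever the MMM actually estimates.

```python
def response(spend, scale, half_saturation):
    """Hypothetical saturating curve: revenue approaches `scale` as spend grows."""
    return scale * spend / (spend + half_saturation)


def rebalance(budget, channels, step=1000.0):
    """Assign budget in fixed steps to whichever channel gains most from the next step.

    `channels` maps a channel name to (scale, half_saturation). Because each curve
    flattens as spend rises, a saturated channel stops winning further steps.
    """
    allocation = {name: 0.0 for name in channels}
    remaining = budget

    def gain(name):
        # Extra revenue from adding one more step of spend to this channel.
        scale, half = channels[name]
        current = allocation[name]
        return response(current + step, scale, half) - response(current, scale, half)

    while remaining >= step:
        best = max(channels, key=gain)
        allocation[best] += step
        remaining -= step
    return allocation


# Made-up parameters: paid social saturates quickly, TV has more headroom.
example_channels = {"paid_social": (50_000.0, 5_000.0), "tv": (200_000.0, 40_000.0)}
plan = rebalance(20_000.0, example_channels)
```

With these invented numbers, the first steps go to paid social, but its marginal return drops fast and most of the remaining budget flows to TV. That is the same pattern as the rebalance described in the session.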

Demo section 4: Applying recommendations directly

Once approved, recommendations could be pushed into execution platforms through APIs, reducing the delay between analysis and activation.

One of the strongest moments in the demo came when the recommendation was accepted and applied. The system showed how budget changes could be sent directly into connected media platforms through APIs.

That is where the promise of agentic AI becomes especially tangible: not just smarter measurement, but faster operational follow-through.

Demo section 5: Model retraining and data quality checks

The demo showed how agents can monitor model freshness, detect data issues, suggest fixes, and support retraining workflows.

The presenters also demonstrated how the system handled MMM retraining. Before refreshing the model, the agent automatically ran data quality checks on the new dataset, surfaced issues, and proposed remedies.

This is highly practical. Retraining is one of the most important but operationally tedious parts of MMM maintenance. By making that workflow more supervised and less manual, the system reduces friction while protecting trust in the model.
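As a rough sketch of what pre-retraining checks might look like, the function below scans weekly spend rows for a few common problems. The row layout (`week`, `channel`, `spend`) and the specific checks are assumptions for illustration. The session did not list which checks the agent actually runs.

```python
from datetime import date, timedelta


def check_weekly_data(rows):
    """Return human-readable issues found in weekly spend rows.

    Each row is assumed to be a dict with 'week' (a date), 'channel', and 'spend'.
    """
    issues = []
    weeks_by_channel = {}
    for row in rows:
        if row["spend"] is None:
            issues.append(f"{row['channel']}: missing spend for week {row['week']}")
        elif row["spend"] < 0:
            issues.append(f"{row['channel']}: negative spend for week {row['week']}")
        weeks_by_channel.setdefault(row["channel"], []).append(row["week"])

    for channel, weeks in weeks_by_channel.items():
        ordered = sorted(weeks)
        for previous, current in zip(ordered, ordered[1:]):
            if current == previous:
                issues.append(f"{channel}: duplicate rows for week {current}")
            elif current - previous > timedelta(days=7):
                issues.append(f"{channel}: gap between {previous} and {current}")
    return issues


# Made-up data: one negative value and one skipped week.
sample_rows = [
    {"week": date(2025, 1, 6), "channel": "tv", "spend": 1000.0},
    {"week": date(2025, 1, 13), "channel": "tv", "spend": -50.0},
    {"week": date(2025, 1, 27), "channel": "tv", "spend": 900.0},
]
found = check_weekly_data(sample_rows)
```

An agent could surface a list like `found` to the user along with a proposed fix for each item before any retraining starts.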

Demo section 6: Turning uncertainty into experimentation

An agent-generated alert triggered the design and launch of a GeoX test to validate uncertain channel performance.

The final major section of the demo returned to the earlier online audio alert. Because the model showed uncertainty around ROI, the agent recommended a GeoX test. It then helped design the experiment, create region pairings, suggest budget and duration, and connect implementation into the activation platform.

The system even identified a conflict with existing national campaigns and prompted the user to resolve it before launch. That showed that the workflow was not simply automated. It was connected, aware, and designed to support better decisions in context.
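The region-pairing step can be sketched in a few lines. This is one simple approach, not the method used in the demo: it assumes each region has a single baseline sales figure, pairs regions that are adjacent in the ranking, and randomly assigns one region in each pair to treatment. The region names and figures are invented.

```python
import random


def pair_regions(baseline_sales, seed=0):
    """Pair regions with similar baseline sales and randomize treatment within pairs.

    `baseline_sales` maps a region name to a baseline sales figure. With an odd
    number of regions, the highest-ranked region is left out of the test.
    """
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    ranked = sorted(baseline_sales, key=baseline_sales.get)
    pairs = []
    for i in range(0, len(ranked) - 1, 2):
        first, second = ranked[i], ranked[i + 1]
        if rng.random() < 0.5:
            first, second = second, first
        pairs.append({"treatment": first, "control": second})
    return pairs


# Made-up regions and baseline sales.
example_sales = {"north": 120.0, "south": 480.0, "east": 130.0, "west": 500.0}
test_pairs = pair_regions(example_sales)
```

Real geo experiments typically match on more than one metric and on historical trends, so this should be read as a starting point for the idea rather than a complete design.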

What this event made clear about the future of measurement

The future of measurement is not only about improving models. It is about reducing the distance between insight and execution.

MMM, experimentation, and attribution are still critical. But on their own, they do not solve the operational lag that exists inside many organizations. Agentic AI offers a way to make those capabilities more usable, more connected, and more actionable.

The most compelling takeaway was not that AI will replace marketers. It was that marketers may soon work inside systems that help them respond faster, validate more confidently, and spend less time on manual translation between analysis and action.

In that sense, the future of measurement looks less like a static dashboard and more like an intelligent operating layer around the entire workflow. And that could be the real shift: not just better insight, but measurement that is finally built to move.

Curious how agentic AI could fit into your marketing measurement? Contact us to explore next steps.
