The field of data engineering is evolving quickly. This article describes three major trends I see taking shape in the coming years.

The data engineer role barely existed ten years ago. Since then, demand for this particular kind of software engineer has grown, and the role has evolved as the field has matured.

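A data engineer's responsibilities vary from one company to another, and the role does not evolve at the same pace everywhere. Still, I see it changing in three ways: data engineers will rely heavily on cloud technologies and SaaS products, they will spend less time coding and more time monitoring, and they will move from feature teams to core teams.

Let's get into the details.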

Data engineers will rely heavily on cloud technologies and SaaS products.

Ten years ago, companies relied on on-premises infrastructure to store their data, which is why the first big data technologies were designed for on-premises environments. Back then, data engineers spent a lot of time tuning machine configurations at the expense of creating business value.

Then cloud providers promised managed services that they run for you, so that you can focus on your business needs. That was a game changer.

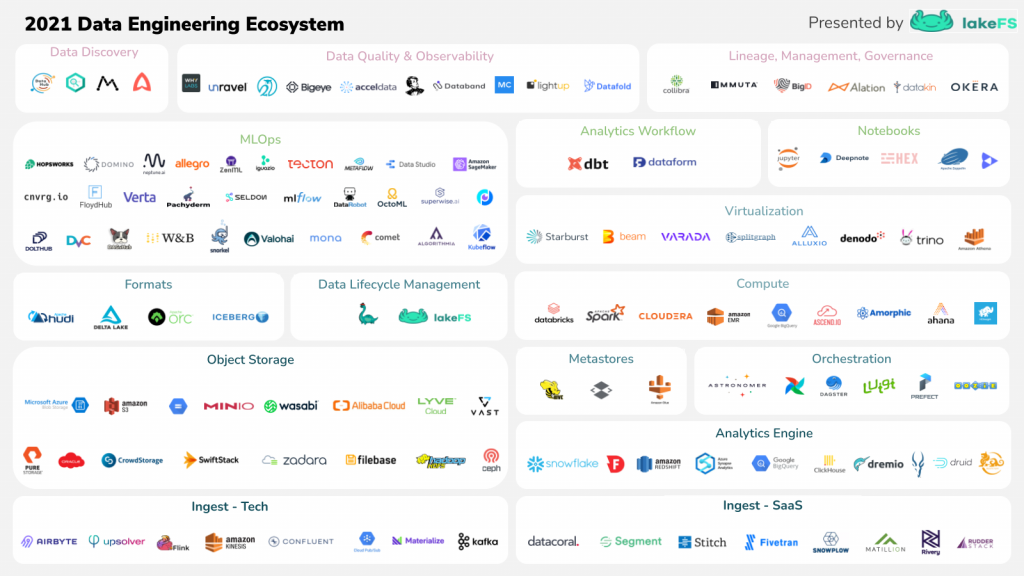

Today, cloud providers and technology companies such as Snowflake and Databricks have made it easier to work with data at scale. The data ecosystem has also matured. New data start-ups have emerged in specific areas such as data quality, data governance, and data ingestion. Integration between these products is seamless.

The days when data engineers had a single Apache Foundation tool for each specific need are over. They now have a myriad of tools that do the same thing, and it is their responsibility to choose the best ones. As a result, they need a solid knowledge of the ecosystem, and they need to know how to run comparative analyses and pick relevant decision criteria.
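One simple way to make such a comparison explicit is a weighted decision matrix. The sketch below is only an illustration: the criteria, the weights, the tool names (`tool_a`, `tool_b`), and the scores are all hypothetical placeholders, not measurements of any real product.

```python
from typing import Dict, List, Tuple

# Hypothetical criteria and weights (they sum to 1.0); adapt them to your context.
WEIGHTS: Dict[str, float] = {"cost": 0.3, "integration": 0.4, "maturity": 0.3}

# Placeholder scores from 1 (poor) to 5 (excellent), for illustration only.
SCORES: Dict[str, Dict[str, int]] = {
    "tool_a": {"cost": 4, "integration": 3, "maturity": 5},
    "tool_b": {"cost": 2, "integration": 5, "maturity": 4},
}


def weighted_score(scores: Dict[str, int], weights: Dict[str, float]) -> float:
    """Combine per-criterion scores into a single weighted number."""
    return sum(weights[criterion] * scores[criterion] for criterion in weights)


def rank_tools(
    all_scores: Dict[str, Dict[str, int]], weights: Dict[str, float]
) -> List[Tuple[str, float]]:
    """Return (tool, score) pairs sorted from best to worst."""
    scored = [(name, weighted_score(s, weights)) for name, s in all_scores.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranking = rank_tools(SCORES, WEIGHTS)
for name, score in ranking:
    print(f"{name}: {score:.2f}")
```

The value of the exercise lies less in the final number than in forcing the team to agree on the criteria and their weights before looking at vendors.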

Choosing the right tool for the job is not easy, and integrating those tools into a coherent data platform is a challenge of its own. Some data engineers already use infrastructure as code to assemble these building blocks and automate infrastructure deployment. I think this will become a mandatory skill.
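To give a feel for the idea behind infrastructure as code, here is a toy sketch of its core loop: the desired state is declared as data, and a `plan` function works out which changes would bring the real environment in line with it. This is not how any particular tool is implemented; the resource names and attributes are hypothetical.

```python
from typing import Dict, List, Tuple

Resource = Dict[str, str]


def plan(
    desired: Dict[str, Resource], actual: Dict[str, Resource]
) -> List[Tuple[str, str]]:
    """Compare declared resources with existing ones and list the actions needed."""
    actions: List[Tuple[str, str]] = []
    for name in sorted(desired):
        if name not in actual:
            actions.append(("create", name))
        elif desired[name] != actual[name]:
            actions.append(("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(("delete", name))
    return actions


# Hypothetical declared platform: a warehouse and an ingestion job.
desired = {
    "warehouse": {"size": "small"},
    "ingestion_job": {"schedule": "hourly"},
}
# Hypothetical current state of the environment.
actual = {
    "warehouse": {"size": "xsmall"},
    "legacy_bucket": {"region": "eu"},
}

actions = plan(desired, actual)
for action, name in actions:
    print(action, name)
```

Because the platform is described as data kept under version control, the same review and automation practices used for application code can apply to infrastructure changes.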

Data engineers will spend less time coding and more time monitoring.

The days when data engineers developed complex ETL pipelines in Scala and Spark seem to be over.

For extraction, you can now use technologies such as Airbyte to schedule extraction jobs from a large number of different sources. Loading is no longer a problem either. Snowflake, for example, lets you load a file from blob storage into a table with a single SQL command.
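As an illustration of that single command, the sketch below builds a Snowflake `COPY INTO` statement as a string. It assumes an external stage pointing at the blob storage already exists; the table name `raw.orders`, the stage `my_blob_stage`, and the helper `build_copy_statement` are hypothetical. The helper only formats text and performs no input sanitization, so it is not meant for untrusted input.

```python
def build_copy_statement(table: str, stage_path: str, skip_header: int = 1) -> str:
    """Return a COPY INTO statement that loads CSV files from a stage into a table."""
    return (
        f"COPY INTO {table} "
        f"FROM @{stage_path} "
        f"FILE_FORMAT = (TYPE = 'CSV' SKIP_HEADER = {skip_header})"
    )


# Hypothetical target table and stage path.
statement = build_copy_statement("raw.orders", "my_blob_stage/orders/")
print(statement)
```

The resulting statement could then be run from a Snowflake worksheet or through whichever client your team already uses.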

As for the transformation step, dbt introduced a new paradigm in which you transform your data inside your data warehouse, using SQL as the main language. The shift from ETL to ELT is complete.
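The ELT pattern can be sketched in a few lines. The example below uses an in-memory SQLite database as a stand-in for a real warehouse, and a plain `CREATE TABLE ... AS SELECT` as a stand-in for what a dbt model materialized as a table would roughly do. The table and column names are hypothetical.

```python
import sqlite3

# SQLite in memory stands in for the data warehouse (an assumption for this sketch).
conn = sqlite3.connect(":memory:")

# Load: raw rows land in the warehouse untransformed.
conn.execute(
    "CREATE TABLE raw_orders (order_id INTEGER, customer TEXT, amount_cents INTEGER)"
)
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [(1, "alice", 1250), (2, "bob", 800), (3, "alice", 450)],
)

# Transform: a SQL SELECT, materialized as a table inside the warehouse itself.
conn.execute(
    """
    CREATE TABLE customer_revenue AS
    SELECT customer, SUM(amount_cents) / 100.0 AS revenue
    FROM raw_orders
    GROUP BY customer
    """
)

rows = conn.execute(
    "SELECT customer, revenue FROM customer_revenue ORDER BY customer"
).fetchall()
print(rows)
```

The point is the ordering: data is loaded first and transformed afterwards with SQL, where the warehouse does the heavy lifting, rather than being reshaped by a separate engine before loading.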

Deploying a workflow has never been easier, and we have the modern data stack to thank for that. The modern data stack is a set of technologies that aim to reduce the complexity of data workflows and increase the velocity of the data team. Thanks to it, data analysts can now be autonomous: they no longer need help from data engineers to collect and transform raw data. But does that mean data engineers are no longer needed in data teams? 😟

I may be biased, but I think the answer is NO.

I think the data engineer role will become more operations-oriented. The next generation of data engineers will focus on improving data reliability across the whole company, and their responsibilities will follow from that focus.

Software development went through this a few years ago with the rise of site reliability engineers (SREs), and we may see a similar trend in the data world. A new job title will emerge: the data reliability engineer. Data reliability engineers will be responsible for making sure that data is available on time and that it can be trusted.

We will see more data engineers in charge of defining service level indicators (SLIs) and service level objectives (SLOs). They will also take part in on-call rotations and respond to incidents.
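To make SLIs and SLOs concrete for data, here is a minimal sketch of a freshness indicator: the fraction of pipeline runs whose data landed within an agreed delay, compared against a target. The one-hour threshold, the 95% target, and the sample delays are hypothetical values chosen for illustration.

```python
from datetime import timedelta
from typing import List


def freshness_sli(delivery_delays: List[timedelta], threshold: timedelta) -> float:
    """SLI: fraction of runs whose data was delivered within the threshold."""
    if not delivery_delays:
        raise ValueError("at least one run is needed to compute the SLI")
    on_time = sum(1 for delay in delivery_delays if delay <= threshold)
    return on_time / len(delivery_delays)


def meets_slo(sli: float, slo_target: float) -> bool:
    """SLO check: the indicator must reach the agreed target."""
    return sli >= slo_target


# Hypothetical delivery delays (in minutes) for the last five runs.
delays = [timedelta(minutes=m) for m in (12, 45, 30, 95, 20)]
sli = freshness_sli(delays, threshold=timedelta(hours=1))
print(f"freshness SLI: {sli:.0%}, SLO met: {meets_slo(sli, 0.95)}")
```

In this sample, four of the five runs arrive within the hour, so the indicator falls short of a 95% target, which is the kind of signal that would trigger an investigation or an incident.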

A data engineer's day-to-day work will change, and so will their position within the organization.

Data engineers will change teams, moving from feature teams to core teams.

Historically, data engineers were members of development teams. The problem is that this led to data silos and a lack of overall consistency. That is why companies started to adapt by creating cross-cutting teams.

The next generation of data engineers will not work on one particular data product. Their goal will be to make product teams more productive, and to do so they will have to provide the right set of tools. This is the rationale behind the data mesh paradigm: distributed ownership, with a core team that provides all the tools needed to build data products.

So the next time you need to build a dashboard for financial reporting, you will not need a team made up of a product owner, a data analyst, and a data engineer. The data analyst will be autonomous and will use the tools deployed by the core team to quickly extract the necessary data and compute key performance indicators from that raw data.

Conclusion

Gazing into a crystal ball is a difficult exercise, and the opinions expressed above carry a degree of uncertainty. Still, I hope this article gets you thinking about the future of the role, and I would be happy to read your thoughts in the comments!

It is time to put my crystal ball aside for a moment and invite you to check out our open positions. Artefact is the ideal place to think about the future of our field.
