In today's digital age, organizations are challenged to keep pace with the unprecedented rate at which data is generated and with the abundance of business systems and digital technologies that collect all kinds of data. On top of that comes the need to analyze these vast amounts of data quickly and efficiently in order to generate insights and information and to maximize their business value. As a result, data platforms have become an essential foundation for organizations to deploy data efficiently in ways that deliver timely, data-driven business decisions and competitive advantage.

“Data solutions and insights are finding their way into more and more organizations to enable business growth. Organizations must set up big data platforms as a solid foundation for implementing data solutions at scale. These platforms must be designed specifically for business purposes, since they are only as good as the business insights and information they deliver; they must also be future-proof, so that they can benefit from the ongoing advances in data technologies.” Oussama Ahmad, Data partner at Artefact

Key objectives of the big data platform

Data platforms are intended to break down data silos and integrate the different types of data needed to implement advanced data solutions and insights. They provide a scalable and flexible infrastructure for collecting, storing, and analyzing large amounts of data from multiple sources. These platforms should use leading data services and technologies and meet three key objectives:

Infrastructure of the big data platform

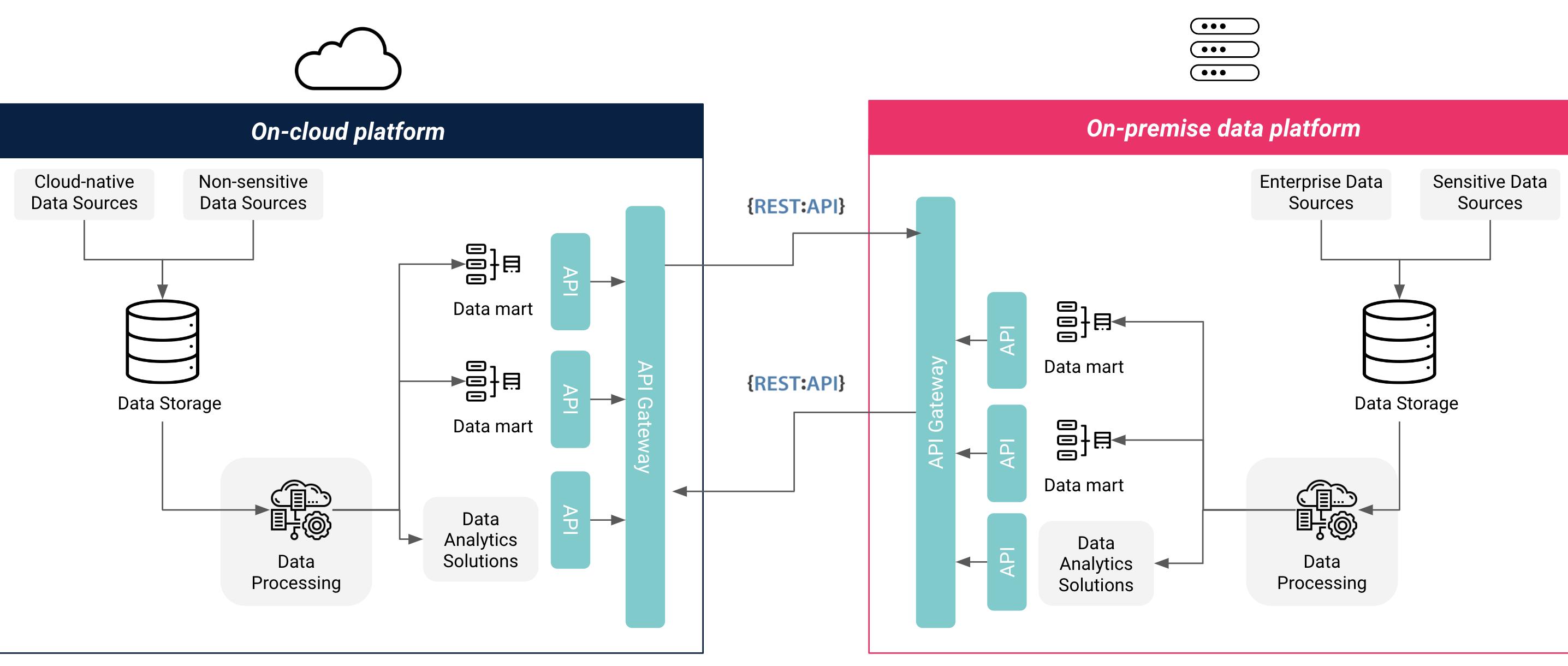

There are several infrastructure options for a data platform: fully on-premise, fully cloud, or a hybrid combination of cloud and on-premise, each with its own advantages and challenges. When choosing the most suitable infrastructure option for their big data platform, organizations should weigh a number of factors, including data privacy and data storage requirements, data integration, functionality and scalability requirements, and cost and time. A fully cloud architecture offers more predictable costs, ready-made services and integrations, and rapid scalability, but provides no control over the hardware and may not comply with regulations on data privacy and storage. A fully on-premise architecture provides full control over the hardware and the data and generally complies with regulations on privacy and data storage, but it brings higher costs and requires long-term planning for scalability. A hybrid cloud architecture offers the best of both worlds and allows a full migration to the cloud at a later time, but may require a more complex setup.

Many organizations choose a hybrid infrastructure for their data platform because organizational considerations require them to keep highly sensitive data (such as customer and financial data) on their own servers, or because no government-certified cloud service providers (CSPs) meet the local requirements for data privacy and data storage. These organizations also prefer to keep non-sensitive data in the cloud in order to optimize storage and compute costs and to take advantage of the ready-made data and machine learning services available from CSPs. Other organizations, which have no organizational or legal requirement to keep data within the organization or the country, choose a fully cloud infrastructure because of its faster implementation time, optimized costs, and easily scalable resources.
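The placement reasoning above can be sketched as a small rule. This is only an illustration under stated assumptions: the names `Dataset`, `Location`, and `choose_location`, and the two boolean flags, are hypothetical and do not come from any specific platform or from Artefact's methodology.

```python
from dataclasses import dataclass
from enum import Enum


class Location(Enum):
    ON_PREMISE = "on-premise"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Dataset:
    name: str
    sensitive: bool  # e.g. customer or financial data


def choose_location(
    dataset: Dataset,
    *,
    certified_csp_available: bool,
    policy_requires_own_servers: bool,
) -> Location:
    """Hypothetical placement rule for a hybrid data platform.

    Sensitive data stays on the organization's own servers when internal
    policy demands it or when no certified CSP meets local requirements.
    Everything else goes to the cloud.
    """
    if dataset.sensitive and (
        policy_requires_own_servers or not certified_csp_available
    ):
        return Location.ON_PREMISE
    return Location.CLOUD


if __name__ == "__main__":
    customers = Dataset("customers", sensitive=True)
    web_logs = Dataset("web_logs", sensitive=False)
    for ds in (customers, web_logs):
        target = choose_location(
            ds,
            certified_csp_available=False,
            policy_requires_own_servers=False,
        )
        print(ds.name, "->", target.value)
```

A real decision would weigh more factors (cost, integration effort, timelines), but even a simple explicit rule like this makes the hybrid split easy to audit.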

Figure 1: Infrastructure for a hybrid cloud and on-premise data platform

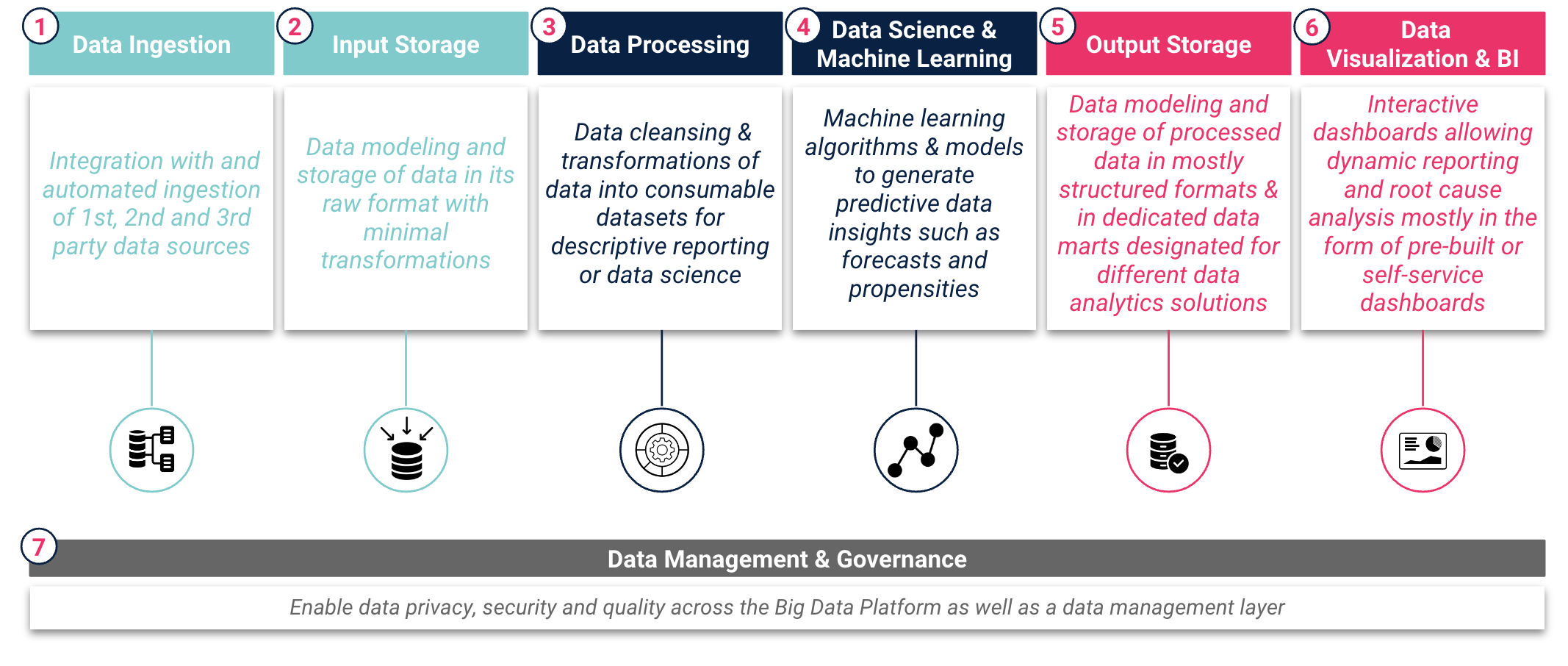

A data platform typically consists of seven main layers that reflect how data moves from “raw data” to “information” and “insights”. Organizations should carefully consider which services and tools are needed for each layer to ensure a seamless data flow and the efficient generation of insights. These services and tools must fulfil key functions in each layer of the data platform, as shown in Figure 2.

Figure 2: Layers of the big data platform

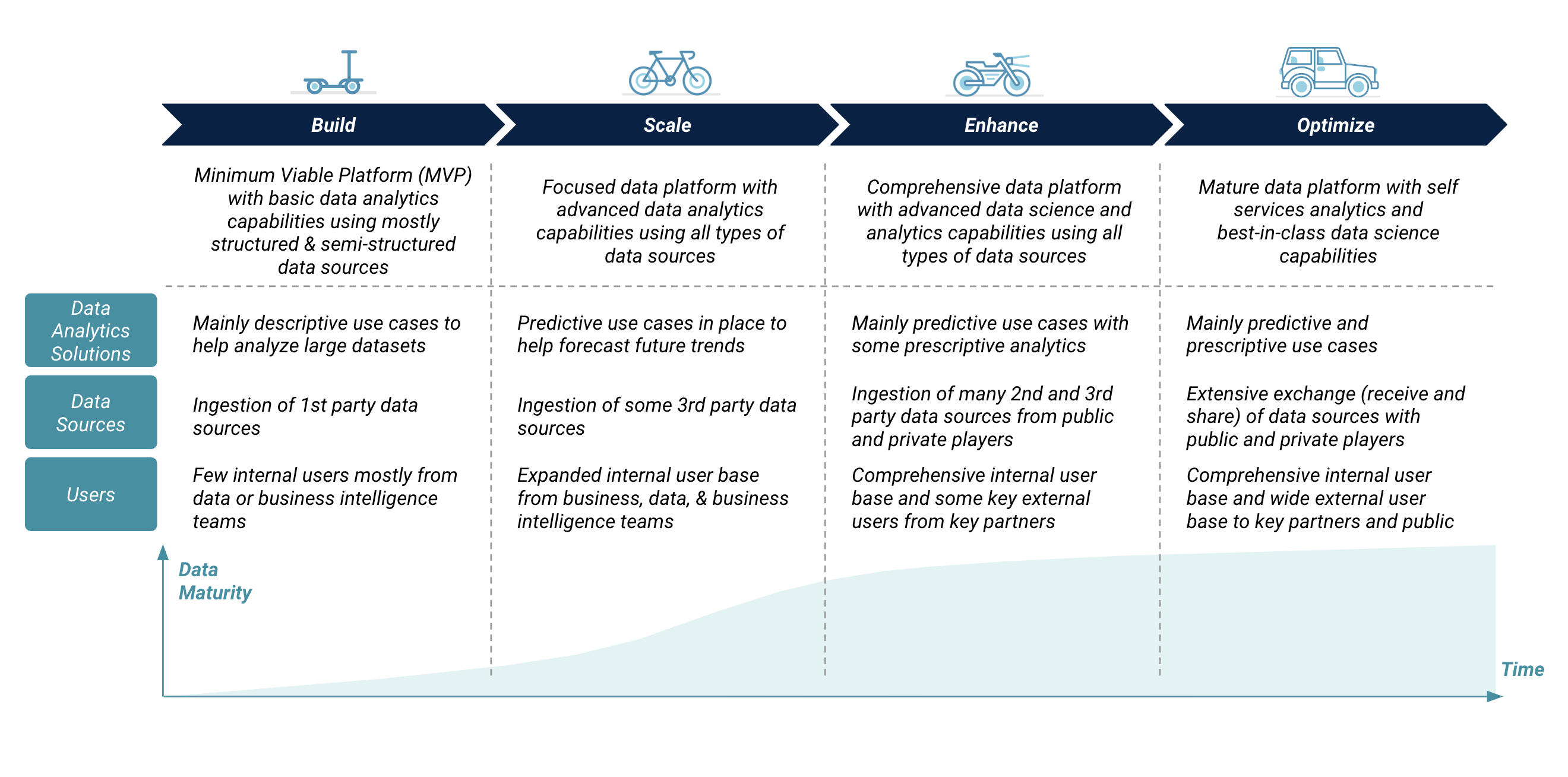

The evolution of the big data platform

A big data platform should be developed in phases, starting with a minimum viable platform (MVP) and followed by incremental upgrades. An organization should align the development of its data platform with the growing need for broader and faster data insights and information for business decisions. These growing needs affect the complexity of the data platform in terms of data usage, the volume and types of data, and the internal and external users. The evolution of the data platform involves adding more storage and compute capacity, more advanced features and functionality, and improvements in the security and management of the platform.

Figure 3: The evolution of the big data platform

“We have seen that many organizations tend to build big data platforms with advanced and unnecessary features from the very start, which drives up the technology cost of ownership. The implementation of a data platform should begin with a minimum viable platform and then evolve based on business and technology requirements. In the early phases of platform development, organizations should implement a robust data governance and management layer that ensures data quality, privacy, security, and compliance with local and regional data regulations.” Anthony Cassab, Data director at Artefact

Guidelines for a future-proof big data platform

A big data platform should be built according to key architectural guidelines that make it future-proof: it can be scaled easily, it can be moved between different on-premise and cloud environments, its services can be upgraded and replaced, and its mechanisms for handling and sharing data can be extended.

“A flexible and modular platform that can grow with changing business needs is preferable to a ‘black box’ platform that is well integrated but offers only limited room for customization. These platform architectures can be built wholly or partly in the cloud to benefit from cloud advantages such as scalability and cost efficiency, while still meeting the privacy and security requirements of data regulations.” Faisal Najmuddin, Data manager at Artefact
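One common way to keep services replaceable, as the guidelines above call for, is to have platform layers depend on a small interface rather than on a concrete vendor service. The sketch below is a minimal illustration under that assumption; `ObjectStore`, `InMemoryStore`, `IngestionLayer`, and `migrate` are hypothetical names, and the in-memory store merely stands in for a real on-premise or cloud storage service.

```python
from collections.abc import Iterable
from typing import Protocol


class ObjectStore(Protocol):
    """Minimal storage contract that any backend must satisfy."""

    def put(self, key: str, payload: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Stand-in for an on-premise or cloud storage service."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, payload: bytes) -> None:
        self._objects[key] = payload

    def get(self, key: str) -> bytes:
        return self._objects[key]


class IngestionLayer:
    """Depends only on the ObjectStore contract, not on a vendor."""

    def __init__(self, store: ObjectStore) -> None:
        self._store = store

    def ingest(self, key: str, record: str) -> None:
        self._store.put(key, record.encode("utf-8"))

    def read(self, key: str) -> str:
        return self._store.get(key).decode("utf-8")


def migrate(keys: Iterable[str], source: ObjectStore, target: ObjectStore) -> None:
    """Copy the given objects from one backend to another."""
    for key in keys:
        target.put(key, source.get(key))


if __name__ == "__main__":
    on_prem = InMemoryStore()
    IngestionLayer(on_prem).ingest("orders/1", "order-1")

    cloud = InMemoryStore()
    migrate(["orders/1"], on_prem, cloud)
    print(IngestionLayer(cloud).read("orders/1"))
```

Because `IngestionLayer` only knows the two-method contract, swapping the backend or migrating data later does not require changes to the layer itself.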

In short, a data platform offers organizations numerous benefits, such as centralizing data, enabling advanced data solutions, and providing enterprise-wide access to data solutions and sources. Implementing a data platform does, however, involve a number of strategic decisions: choosing the right infrastructure(s), adopting a future-proof architecture, selecting standard and “migratable” services, carefully considering data regulations, and finally defining an optimal evolution plan that is closely aligned with business needs and maximizes the return on data investments.
