25 November 2020

Call centre advisors are starting to see NLU emerge in their day-to-day work, helping them answer customers’ requests more easily. To do that, a tool must recognize both the customer’s request and its characteristics: in other words, an intent and named entities.

“OK Google, play the Rolling Stones on Spotify.”, “Alexa, what is the weather like in Paris today?”, “Siri, who is the French president?”

If you have ever used a voice assistant, you have indirectly used Natural Language Understanding (NLU) processes. The same logic applies to chatbot assistants and to the automated routing of tickets in customer service. NLU has been part of our everyday lives for some time now, and that is unlikely to change.

By automating the extraction of customer intent, for example, NLU can help us answer our clients’ requests faster and more accurately. That is why many large companies have embarked on developing their own solutions. Yet with so many libraries and models in the NLU field, all claiming state-of-the-art or easy-to-get results, it can be hard to find one’s way around. Having experimented with various libraries in our NLU projects at Artefact, we wanted to share our results and help you get a better understanding of the current tools in NLU.

What is NLU?

Natural Language Understanding (NLU) is defined by Gartner as “the comprehension by computers of the structure and meaning of human language (e.g., English, Spanish, Japanese), allowing users to interact with the computer using natural sentences”. In other words, NLU is a subdomain of artificial intelligence that enables the interpretation of text by analysing it, converting it into computer language and producing an output in an understandable form for humans.

If you look closely at how chatbots and virtual assistants work, from your request to their answer, NLU is the layer that extracts your main intent and any information the machine needs to answer your request as well as possible. Say you call your favorite brand’s customer service to find out whether your dream bag is finally available in your city: NLU will tell the assistant that you have a product availability request, and the assistant will look up that particular item in the product database to find out whether it is available at your desired location. Thanks to NLU, we have extracted an intent, a product name and a location.

(Above: Illustration of a customer intent and several entities extracted from a conversation)
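To make the idea concrete, here is a minimal sketch of what the output of an NLU layer could look like for the bag example. The class names, the intent name `productAvailability`, the entity labels and the sample utterance are all hypothetical and chosen for illustration; they are not taken from any of the libraries discussed in this article.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Entity:
    label: str  # entity category (hypothetical label names)
    text: str   # the span exactly as it appears in the utterance
    start: int  # character offset where the span starts
    end: int    # character offset just past the end of the span


@dataclass
class NLUResult:
    utterance: str
    intent: str
    entities: List[Entity] = field(default_factory=list)


def make_entity(utterance: str, label: str, text: str) -> Entity:
    """Locate `text` in the utterance and record its character offsets."""
    start = utterance.index(text)  # raises ValueError if the span is absent
    return Entity(label, text, start, start + len(text))


# Hypothetical utterance and annotations for the "dream bag" example.
utterance = "Is the red leather bag available in Paris?"
result = NLUResult(
    utterance=utterance,
    intent="productAvailability",
    entities=[
        make_entity(utterance, "product_colour", "red"),
        make_entity(utterance, "product_type", "bag"),
        make_entity(utterance, "city", "Paris"),
    ],
)

for entity in result.entities:
    print(result.intent, entity.label, entity.text, entity.start, entity.end)
```

Storing character offsets alongside the text is a common design choice because it lets predicted entities be compared with annotated ones span by span.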

Natural language is present in most companies’ data. With the recent breakthroughs in the field, the democratisation of NLU algorithms, and access to more computing power and more data, many NLU projects have been launched. Let’s look at one of them.

Project presentation

A typical NLU project is, as mentioned above, helping call centre advisors answer customers’ requests more easily as the conversation unfolds. This requires us to perform two different tasks:

- Understand the customer’s intent during the call (i.e. text classification)

- Catch the important elements that would make it possible to answer the customer’s request (i.e. named-entity recognition), for example contract numbers, product type, product color, etc.

When we first looked at the simple, off-the-shelf solutions available for both of these tasks, we found more than a dozen frameworks, some developed by the GAFAM companies and some by open-source contributors. It was impossible to know which one to choose for our use case, or how each of them would perform on a concrete project with real data (here, call centre audio conversations transcribed into text). That is why we decided to share our performance benchmark, along with some tips and the pros and cons of each solution we tested.

It is important to note that this benchmark was run on English data and transcribed speech, so it is less reliable as a reference for other languages or for applications that work directly on written text, e.g. chatbot use cases.

Benchmark

Intent Detection

The goal here is to detect what the customer wants, i.e. their intent. Given a sentence, the model has to classify it into the right class, each class corresponding to a predefined intent. When there are more than two classes, this is called a multi-class classification task. For example, an intent can be “wantsToPurchaseProduct” or “isLookingForInformation”. In our case, we defined 5 different intents and used the following six solutions for the benchmark:

- FastText: library for efficient learning of word representations and sentence classification created by Facebook’s AI Research lab.

- Ludwig: a toolbox that allows users to train and test deep learning models without writing code, using either the command line or the programmatic API. The user just has to provide a CSV file (or a pandas DataFrame with the programmatic API) containing the data, a list of columns to use as inputs, and a list of columns to use as outputs; Ludwig will do the rest.

- Logistic regression with spaCy preprocessing: classic logistic regression using the scikit-learn library, with custom preprocessing using the spaCy library (tokenization, lemmatization, stop-word removal).

- BERT with spaCy pipeline: spaCy model pipelines that wrap Hugging Face’s transformers package to access state-of-the-art transformer architectures such as BERT easily.

- LUIS: Microsoft cloud-based API service that applies custom machine-learning intelligence to a user’s conversational, natural language text to predict intent and entities.

- Flair: a framework for state-of-the-art NLP for several tasks such as named entity recognition (NER), part-of-speech tagging (PoS), sense disambiguation and classification.
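As an illustration of how little preparation some of these tools need, fastText's supervised mode reads a plain-text file in which each line starts with one or more labels prefixed by `__label__`, followed by the text. The sketch below converts labelled utterances into that format using only the standard library. The cleaning rules (lowercasing, stripping punctuation) and the sample utterances are assumptions made for illustration, not the preprocessing used in this benchmark.

```python
import re


def to_fasttext_line(intent: str, utterance: str) -> str:
    """Format one labelled utterance as a fastText supervised training line."""
    text = utterance.lower()
    # Replace punctuation with spaces, keeping word characters and apostrophes.
    text = re.sub(r"[^\w\s']", " ", text)
    # Collapse runs of whitespace into single spaces.
    text = " ".join(text.split())
    return f"__label__{intent} {text}"


# Hypothetical labelled utterances.
samples = [
    ("wantsToPurchaseProduct", "Hi, I'd like to buy the blue bag!"),
    ("isLookingForInformation", "What are your opening hours?"),
]

lines = [to_fasttext_line(intent, utterance) for intent, utterance in samples]
print("\n".join(lines))
```

Written to a file, these lines could then be passed to fastText's supervised training, for example through `fasttext.train_supervised(input=...)` in the Python bindings.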

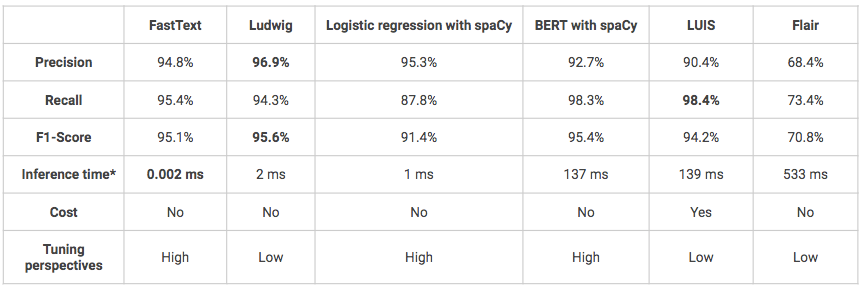

All models were trained and tested on the same datasets: 1600 utterances for training and 400 for testing. The models were not fine-tuned, so some of them could potentially perform better than the results presented here.

*Inference time on a local MacBook Air (1.6 GHz dual-core Intel Core i5, 8 GB 1600 MHz DDR3 RAM).
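The 1600/400 split described above can be reproduced with a seeded shuffle, so that every solution sees exactly the same training and test utterances. This is a minimal sketch under assumptions: the article does not say how the split was made (random, stratified by intent, or otherwise), and the data below is a synthetic placeholder.

```python
import random


def train_test_split(utterances, test_size=400, seed=42):
    """Shuffle a copy of the data with a fixed seed and hold out `test_size` items."""
    shuffled = list(utterances)
    random.Random(seed).shuffle(shuffled)
    return shuffled[test_size:], shuffled[:test_size]


# Placeholder dataset: 2000 (utterance, intent) pairs spread over 5 intents.
labelled = [(f"utterance {i}", f"intent_{i % 5}") for i in range(2000)]

train, test = train_test_split(labelled)
print(len(train), len(test))
```

Fixing the seed matters more than the exact split method here: a benchmark is only comparable across libraries if each one is evaluated on the same held-out utterances.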

- Overall, in terms of performance, all the solutions achieve good or even very good results (F1-score > 70%).

- One drawback of Ludwig and LUIS is that they are very “black-box” models, which makes them more difficult to understand and fine-tune.

- LUIS is the only solution tested that is not open source, and it is therefore much more expensive. In addition, its Python API can be complex to use, since it was initially designed to be used through a point-and-click interface. However, it may be the solution to prefer if your project aims to go into production on an infrastructure built on Azure, for example, since integrating the model will then be easier.
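For readers unfamiliar with the F1-score mentioned above, the sketch below computes it for a multi-class intent classifier in plain Python. It assumes macro averaging (the unweighted mean of per-class F1 scores); the article does not state which averaging was used, and the labels and predictions are made up for illustration.

```python
from collections import Counter


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gains a false positive
            fn[truth] += 1  # true class gains a false negative
    scores = []
    for label in labels:
        # F1 = 2TP / (2TP + FP + FN), equivalent to the harmonic mean
        # of precision and recall.
        denominator = 2 * tp[label] + fp[label] + fn[label]
        scores.append(2 * tp[label] / denominator if denominator else 0.0)
    return sum(scores) / len(scores)


# Made-up gold labels and predictions for three hypothetical intents.
y_true = ["buy", "buy", "info", "info", "return", "return"]
y_pred = ["buy", "info", "info", "info", "return", "buy"]

print(round(macro_f1(y_true, y_pred), 3))  # → 0.656
```

Macro averaging gives each intent the same weight regardless of how many utterances it has, which is useful when some intents are rare.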

Entity extraction

The goal is to locate specific words and classify them correctly into predefined categories. Indeed, once you have detected what your customer would like to do, you may need to find further information in their request. For instance, if a client wants to buy something, you may want to know which product it is and in which colour; if a client wants to return a product, you may want to know on which date or at which store the purchase was made. In our case, we defined 16 custom entities: 9 product-related entities (name, colour, type, material, size, …) and additional entities related to geography and time. As for intent detection, several solutions were benchmarked:

- spaCy: an open-source library for advanced Natural Language Processing in Python that provides different features including Named Entity Recognition.

- LUIS: see above

- Ludwig: see above

- Flair: see above

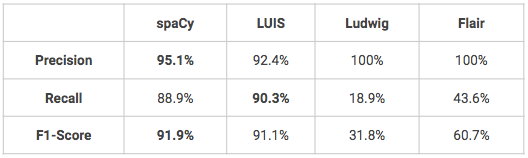

All models were trained and tested on the same datasets: 1600 utterances for training and 400 for testing. The models were not fine-tuned, so some of them could potentially perform better than the results presented here.

- Two models perform really well on custom named-entity recognition: spaCy and LUIS. Ludwig and Flair would require some fine-tuning to obtain better results, especially in terms of recall.

- One advantage of LUIS is that the user can leverage some advanced features for entity recognition, such as descriptors, which provide hints that certain words and phrases are part of an entity domain vocabulary (e.g. colour vocabulary = black, white, red, blue, navy, green).
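To clarify what precision and recall mean for entity recognition, here is a minimal sketch that scores predictions at the entity level. It assumes exact matching on `(label, start, end)` triples; the article does not specify which matching scheme was used for the benchmark, and the labels and offsets below are hypothetical.

```python
def entity_precision_recall(gold, predicted):
    """Entity-level precision and recall with exact (label, start, end) matching."""
    gold_set, pred_set = set(gold), set(predicted)
    true_positives = len(gold_set & pred_set)
    precision = true_positives / len(pred_set) if pred_set else 0.0
    recall = true_positives / len(gold_set) if gold_set else 0.0
    return precision, recall


# Hypothetical annotations for "Is the red leather bag available in Paris?"
gold = [("product_colour", 7, 10), ("product_type", 19, 22), ("city", 36, 41)]
# A model that finds two of the three entities and invents none.
predicted = [("product_colour", 7, 10), ("city", 36, 41)]

precision, recall = entity_precision_recall(gold, predicted)
print(precision, round(recall, 3))  # → 1.0 0.667
```

This example shows the pattern described above for models with weak recall: everything the model predicts is correct, but it misses part of what it should have found.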

Conclusion

Among the solutions tested on our call centre dataset, whether for intent detection or entity recognition, none stands out in terms of performance. In our experience, the choice of one solution over another should therefore be based on practicality and on your specific use case (do you already use Azure, do you prefer to have more freedom to fine-tune your models…). As a reminder, we took the libraries as they are to produce this benchmark, without fine-tuning the models, so the results should be taken with some caution and could vary on a different use case or with more training data.
